An agent chat turned into a skill, and then it QA'd itself

I had a simple index.html page, a marketing site for a client in Spanish.

They asked an English version too, so I opened the chat to Claude’s Opus 4.5 model and asked it to create a near-identical en/index.html with content translated.

Easy peasy.

I opened both files side by side and skimmed, they looked the same with lines in different languages. But I’m used to testing changes, and agents find things to fix before I start more careful checking. While I had this thought, the client recalled they’d need a version in Portuguese as well. “Sure”.

Now, would they synchronize updates in Spanish to the other two languages? Content and design will surely drift.

The solution

I used Claude’s /skill-creator

to build reusable instructions to check parity across all languages. My simple prompt:

Create a skill to check that the index files read well in all languages (Spanish and English, Portuguese upcoming). Check translations match, no page has content that the other does not, and that markup and UI looks and behaves the same.

The agent started writing a Python script to check:

- DOM structure is identical after normalizing expected differences (like the language switcher)

- Texts that should be translated aren’t identical across files

- Same images, same order

- Same stylesheets

- Internal consistency (nav anchors to section IDs,

<html lang>attribute)

I expected hand-wavy, maybe helpful browser-based checks. It wrote a deterministic Python script.

A noisy first run

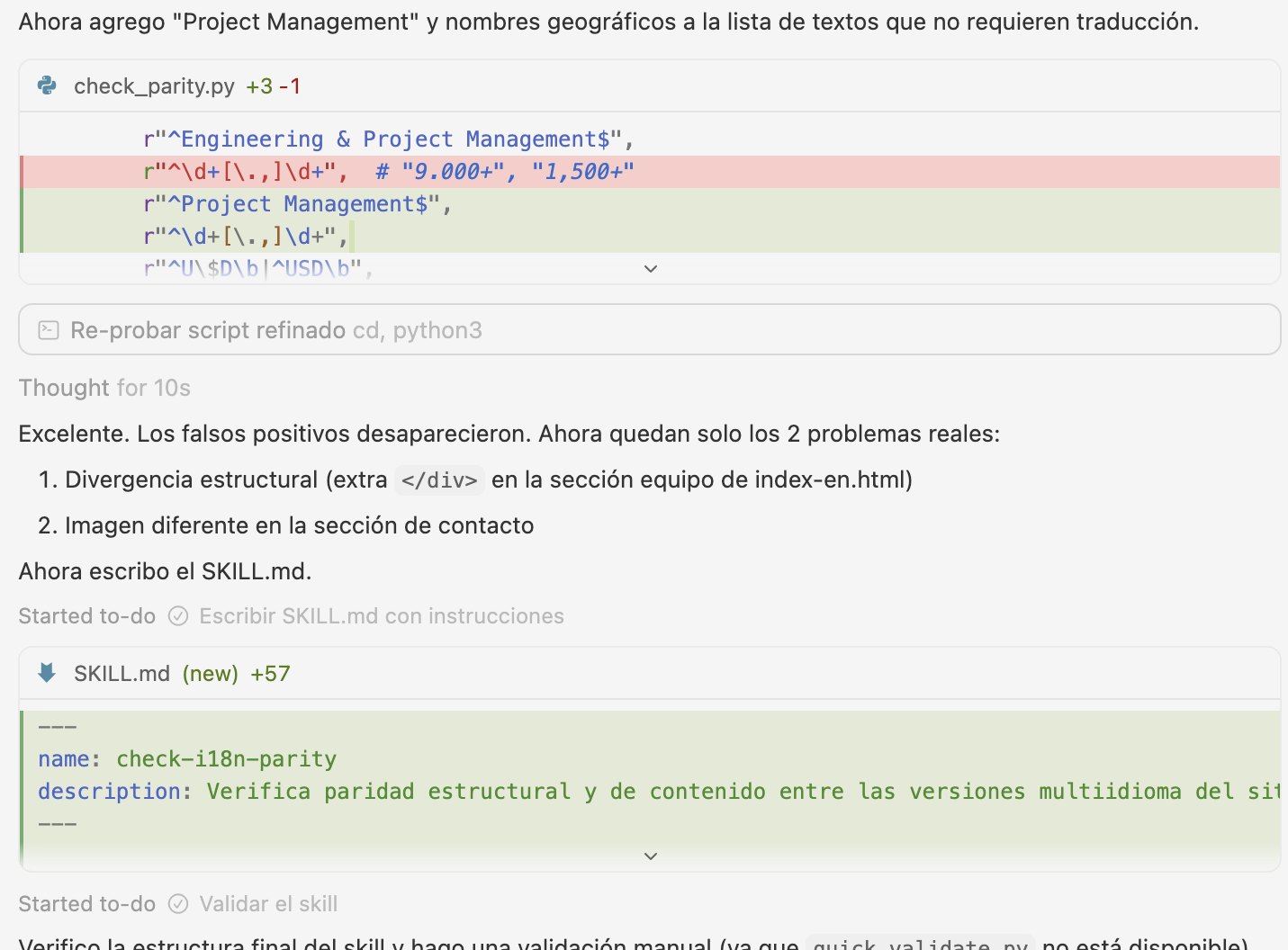

I observed its thought process; it started flagging dozens of mismatches.

Most of them cascaded from a single root cause: an extra closing </div> in the English file

threw off every structural comparison after it. The output was technically correct, but not helpful as a checklist.

It iterated: it identified the root divergence pattern, filtered expected differences (the language switcher, text that’s legitimately identical across languages like proper nouns or acronyms), and re-ran until the output was clean. It also flagged actual issues it had found while building its skill:

The false positives disappeared. Now only the 2 real problems remain.

Two real bugs I’d missed

The extra </div> was a copy-paste error (that didn’t break the UI and I hadn’t noticed).

And an image at the footer had an old path I hadn’t finished migrating.

The skill caught them while testing itself.

Let’s add Portuguese now

When I added pt/index.html, the parity script immediately had a new problem: Spanish and Portuguese share too much vocabulary.

“Engenharia” and “Ingeniería” are different enough, but dozens of short terms like proper nouns or abbreviations are identical.

The script was flagging legitimate cognates as missing translations.

Opus taught the script about language proximity: it introduced a CLOSE_LANG_PAIRS to relate Spanish and Portuguese,

identical text under 30 characters is assumed to be a legitimate cognate and skipped.

For distant pairs (like Spanish and English), any identical text is still suspicious.

That was creative. A human may get there after a while. The agent got there in a chat that turned into an effective, reusable skill.

Closing thoughts

Internationalizing projects used to be complicated. I asked for English and then Portuguese versions, and

I got a system where adding a new language is a tool call and a proof read away.

A system specific to this one project, with its single index.html copied over

in language subdirectories, because it’s simple, and with LLMs it’s now easy to maintain.

Testing the skill on real files caught bugs in the HTML. Adding a third language taught the skill to handle distant and proximate languages. Each step improved either the thing being checked or the thing doing the checking.